A monthly review of climate, energy, environmental, and political policy issues

Articles compiled by Jonathan DuHamel wryheat@cox.net

ATTEMPTED POWER GRABS

The highlight of December was the U.N. Climate Change Conference, known as COP28, which was held in Dubai, United Arab Emirates (a center of oil and gas production). Its goal was to eliminate fossil fuel use in the western world even while China and India are building more coal-fired electric generating facilities. (Read more). ☼

In January, the big deal for “elites” was the World Economic Forum (WEF) held from Jan. 15 to 19 in Davos, Switzerland. The conference included leaders from various industries and nations, celebrities and billionaires. Davos famously draws criticism for promoting a green agenda, as reports claimed up to 1,000 private jets carried conference goers to the meeting. (Read more). ☼

WEF says world’s greatest threat is “Misinformation” — (The biggest threat to experts and billionaires is free speech) (link) ☼

After Years Of Spewing Disinfo, The New World Order At Davos Wants Your Trust (link) ☼

Out-Of-Touch Davos Elites Insist Disinfo On ‘The Science’ No. 1 Global Threat (link) ☼

More scary stuff from WEF:

World Health Organization (WHO) Director-General Tedros Ghebreyesus has called on countries to sign on to the health organization’s pandemic treaty so the world can prepare for “Disease X.” Disease X is a hypothetical “placeholder” virus that has not yet been formed, but scientists say it could be 20 times deadlier than COVID-19. It was added to the WHO’s short list of pathogens for research in 2017 that could cause a “serious international epidemic,” according to a 2022 WHO press release. (Read more) ☼

CLIMATE SCIENCE BACKGROUND:

by Jonathan DuHamel

Geologic evidence shows that Earth’s climate has been in a constant state of flux for more than 4 billion years. Nothing we do can stop that. Much of current climate and energy policy is based upon the erroneous assumption that anthropogenic carbon dioxide emissions, which make up just 0.1% of total greenhouse gases, are responsible for “dangerous” global warming/climate change. There is no physical evidence to support that assumption. Man-made carbon dioxide emissions have no significant effect on global temperature/climate. In fact, when there is an apparent correlation between temperature and carbon dioxide, the level of carbon dioxide in the atmosphere has been shown to follow, not lead, changes in Earth’s temperature. All efforts to reduce emissions are futile with regard to climate change, but such efforts will impose massive economic harm on Western nations. The “climate crisis” is a scam. U.N. officials have admitted that their climate policy is about money and power and destroying capitalism, not about climate. By the way, like all planetary bodies, the Earth loses heat through infrared radiation. Greenhouse gases interfere with (block) some of this heat loss. Greenhouse gases don’t warm the Earth; they slow the cooling. If there were no greenhouse gases, we would have freezing temperatures every night. Submarine volcanoes must be considered when evaluating the causes of climate change. Usually, these changes are regional, not global; however, they may have global influences, such as changing precipitation patterns, as seen with the El Niño Southern Oscillation (ENSO).

“Civilization exists by geological consent, subject to change without notice.” – Will Durant

For more on climate science, see my Wryheat Climate articles:

A Review of the state of Climate Science

The Broken Greenhouse – Why Co2 Is a Minor Player in Global Climate

A Summary of Earth’s Climate History-a Geologist’s View

Problems with wind and solar generation of electricity – a review

The High Cost of Electricity from Wind and Solar Generation

The “Social Cost of Carbon” Scam Revisited

ATMOSPHERIC CO2: a boon for the biosphere

Carbon dioxide is necessary for life on Earth

Impact of the Paris Climate Accord and why Trump was right to drop it

Six Issues the Promoters of the Green New Deal Have Overlooked

Why reducing carbon dioxide emissions from burning fossil fuel will have no effect on climate ☼

CLIMATE ARTICLES FOR JANUARY

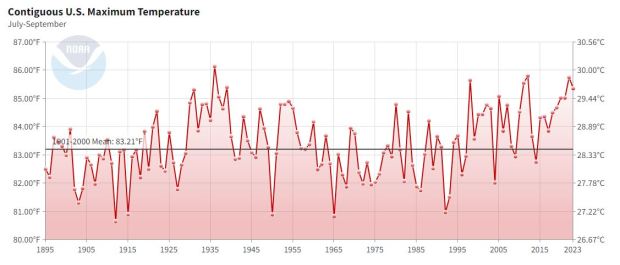

U.S. Climate 2023 Year in Review – In one word: NORMAL (link) ☼

2023 US wildfire season sees total acreage burned under 3 million, far below 10-year avg. & lowest since 1998 (link) ☼

Paleoclimate Reconstructions Continue To Document A Much Warmer-Than-Today Holocene (link) ☼

Beijing records longest cold wave in modern history (link) ☼

Antarctic Sea Ice Volume Greater Than The Early 1980s (link) ☼

Impacts and risks of “realistic” global warming projections for the 21st century

by Nicola Scafetta. This paper demonstrates that realistic emissions scenarios and climate sensitivity values & scenarios of natural climate variability produce more realistic, non-alarming scenarios of 21st century climate. (link) ☼

61 Articles On Studies, Datasets From 2023 Show Climate Models Are Rubbish (link) ☼

Collapsing Antarctic Scare Narrative…4 NEW Papers Find Antarctic Ice Is MORE STABLE Than Thought (link) ☼

An Egregious Failure of Scientific Integrity (link) ☼

CLIMATE DYSTOPIA: How Life Would Get Worse If Climate Alarmists Carry Out Their US Agenda (link) ☼

Climate Alarmist Claim Fact Checks by Joseph D’Aleo, CCM, Jan 17, 2024 (link) ☼

Nearly 160 Scientific Papers Detail The Minuscule Effect CO2 Has On Earth’s Temperature (link) ☼

New Study Concludes ‘CO2 Can Have No Measurable Effect On Ocean Temperatures’ (link) ☼

Unpacking Climate Lies (link) ☼

Global Warming: Observations vs. Climate Models

by Dr. Roy Spencer

Warming of the global climate system over the past half-century has averaged 43 percent less than that produced by computerized climate models used to promote changes in energy policy. In the United States during summer, the observed warming is much weaker than that produced by all 36 climate models surveyed here. While the cause of this relatively benign warming could theoretically be entirely due to humanity’s production of carbon dioxide from fossil-fuel burning, this claim cannot be demonstrated through science. At least some of the measured warming could be natural. Contrary to media reports and environmental organizations’ press releases, global warming offers no justification for carbon-based regulation. (Read full paper) ☼

Astrophysicist Nukes The Entire ‘CO2 Drives Climate Change’ Narrative

by Vigilant Fox

“The whole problem of this global warming is a complete nothing,” Dr. Willie Soon told Tucker Carlson. He says he’s 90% sure the sun, not carbon dioxide, is causing climate change and that the climate czars are so out of their minds that they are misleading the public. (Read more and watch video) ☼

Related from the Jo Nova blog: “They didn’t tell us, the term “fossil fuels” might be wrong too.

Dr Willie Soon unleashes on the failures of climate change and modern science for 40 minutes with Tucker Carlson. As an opening he explained how one of Saturn’s moons has more liquid fuel than all the oil and gas deposits of Earth, which rather pokes a hole in the idea that fossil fuels are only ever made from fossils.” (Read more) ☼

New Study Finds No Evidence Of A CO2-Driven Warming Signal In 60 Years Of IR Flux Data

by Kenneth Richard

“The real atmosphere does not follow the GHG [greenhouse gas] GE [greenhouse effect] hypothesis of the IPCC.” – Miskolczi, 2023

CO2 increased from 310 ppm to 385 ppm (24%) during the 60 years from 1948 to 2008. Observations indicate this led to a negative radiative imbalance of -0.75 W/m². In other words, increasing CO2 delivered a net cooling effect – the opposite of what the IPCC has claimed should happen (Miskolczi, 2023).

Also, there is “no correlation with time and the strong signal of increasing atmospheric CO2 content in any time series,” which affirms “the atmospheric CO2 increase cannot be the reason for global warming.” (Read more) ☼
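For readers who like to check the numbers, here is a minimal sketch of the percentage quoted above. It uses only the two CO2 concentrations given in the excerpt; the variable names are my own and nothing here tests the study's conclusions.

```python
# CO2 concentrations as quoted in the excerpt (parts per million).
co2_1948_ppm = 310
co2_2008_ppm = 385

# Percentage increase over the 60-year period.
increase_ppm = co2_2008_ppm - co2_1948_ppm
increase_pct = increase_ppm / co2_1948_ppm * 100

print(f"Increase: {increase_ppm} ppm, or about {increase_pct:.0f}%")
```

The result rounds to the 24% stated in the excerpt.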

Models, Myths, And Misinformation Undergird Climate Models And Energy Policy

by Paul Driessen

It’s mystifying and terrifying that our lives, livelihoods, and living standards are increasingly dictated by activist, political, bureaucratic, academic, and media elites who disseminate theoretical nonsense, calculated myths, and outright disinformation.

Not only on pronouns, gender, and immigration – but on climate change and energy, the foundation of modern civilization and life spans.

We’re constantly told the world will plunge into an existential climate cataclysm if average planetary temperatures rise another few tenths of a degree from using fossil fuels for reliable, affordable energy, raw materials for over 6,000 vital products, and lifting billions out of poverty, disease, and early death.

Climate alarmism implicitly assumes Earth’s climate was stable until coal, oil, and gas emissions knocked it off-kilter, and would be stable again if people stopped using fossil fuels.

In the real world, climate has changed numerous times, often dramatically, sometimes catastrophically, and always naturally. (Read more) ☼

What Is Climate?–Richard Lindzen

Read this essay and look at the figures (link). Lindzen points out that: “Averaging Mt. Everest and the Dead Sea makes no sense. Instead, we average what is called the temperature anomaly. We average the deviations from a 30-year mean. The figure shows an increase of a bit more than 1°C over 175 years. We are told by international bureaucrats that when this reaches 1.5°C, we are doomed. In all fairness, even the science report of the UN’s IPCC (i.e. the WG1 report) and the US National Assessments never make this claim. The political claims are simply meant to frighten the public into compliance with absurd policies. It remains a puzzle to me why the public should be frightened of a warming that is smaller than the temperature change we normally experience between breakfast and lunch….Earth has dozens of different climate regimes…at any given time, there are almost as many [weather] stations cooling as are warming.” ☼

ENERGY ISSUES

Coal’s Life-Saving Role Ignored By Climate-Obsessed Media

by Vijay Jayaraj

Billions of people all over the world do not have access to secure sources of heat and electricity. For these, winter can be a death blow. A political war against fossil fuels is making matters worse for those unprotected from frigid temperatures. Winter’s icy chill claims far more lives than scorching summer heat, according to global analyses of fatalities caused by various natural hazards. In fact, a 2023 health study conducted across 854 European cities reveals that an estimated annual excess of 203,620 deaths were due to cold while just 20,173 were attributed to heat. In comparative terms, only 1 in 10 excess deaths from extreme temperatures were attributable to heat while a majority were due to cold. (Read more) ☼
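The "1 in 10" comparison can be checked with a few lines of arithmetic. This is only a sketch using the two death counts quoted in the excerpt; the variable names are illustrative and the underlying study was not consulted.

```python
# Annual excess deaths as quoted in the excerpt (854 European cities).
cold_deaths = 203_620
heat_deaths = 20_173

total_deaths = cold_deaths + heat_deaths
heat_share = heat_deaths / total_deaths    # fraction of temperature-related deaths due to heat
cold_to_heat = cold_deaths / heat_deaths   # cold deaths for every heat death

print(f"Heat share of excess deaths: {heat_share:.1%}")
print(f"Cold deaths per heat death: {cold_to_heat:.1f}")
```

On these figures heat accounts for roughly 9% of the temperature-related excess deaths, which is where the excerpt's "1 in 10" comes from.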

Energy at a Glance: Ethanol and Biodiesel

from the Heartland Institute

Biofuels produce more harm than benefits after accounting for their negative impacts on fuel economy, air quality, land use, and food prices.

Quick Bullets:

Ethanol has a lower energy density than gasoline, which means vehicles get fewer miles per gallon.

Per unit of equivalent energy, ethanol produces more carbon dioxide (CO2) than normal gasoline.

Ethanol fuels produce more nitrogen oxides (NOx) and other air pollutants, which contribute to worsening air pollution, especially in summer months.

(Read more – read PDF version) For other topics from Energy at a Glance go here. ☼

The Cult of Darkness

by Edward Hudgins

“Energy is not for conserving; it is for unleashing to serve us, to make our lives better, to allow us to realize our dreams and to reach for the stars, those bright lights that pierce the darkness of the night.” (Read more) ☼

New report highlights Green failure in Europe and warns America (link) ☼

Today’s Materialistic World Cannot Survive Without Crude Oil

by Ronald Stein, Heartland Institute

The elephant in the room that no one wants to discuss is that crude oil is the foundation of our materialistic society as it is the basis of all products and fuels demanded by the 8 billion on this planet.

As a reality check for those pursuing net-zero emissions, wind and solar do different things than crude oil. Unreliable renewables, like wind turbines and solar panels, only generate occasional electricity but manufacture no products for society.

Crude oil is virtually never used to generate electricity but, when manufactured into petrochemicals, is the basis for virtually all the products in our materialistic society that did not exist before the 1800s and that are now used in infrastructure such as transportation, airports, hospitals, medical equipment, appliances, electronics, telecommunications, communications systems, space programs, heating and ventilating, and militaries.

Most importantly, today, there is a lost reality that the primary usage of crude oil is NOT for the generation of electricity but to manufacture derivatives and fuels, which are the ingredients of everything needed by economies and lifestyles to exist and prosper. (Read more) ☼

California’s War On Fossil Fuels Is Having Less-Than-Stellar Consequences

by Nick Pope

California has gone after the fossil fuel industry with vigor, but those efforts do not seem to have made much impact on climate change while proving detrimental to the state’s economy. California’s anti-fossil fuel push also has not moved the needle much on climate change, which alarmists continue to insist is accelerating at a dangerous pace, but it has raised energy costs for Californians, diminished grid reliability, and disincentivized corporate investment that would create or maintain jobs in the state. (Read more) ☼

A Federal Power Grid Would be Everyone’s Worst Nightmare (link) ☼

Caving To Climate Alarmists, Biden Jams Brakes On Massive Natural Gas Projects (link) ☼

ELECTRIC VEHICLES

The Electric Car Con Explained

By William Levin

Is electricity a source of energy? Most people will answer yes, which is incorrect. Electricity carries energy but it is not itself a source of energy, which in the U.S. is supplied 60% by natural gas and coal, 18% nuclear and 22% renewables (hydro, solar and wind).

The related question is whether cars are a major consumer of energy and hence a significant contributor of CO2 emissions. Again, most people believe both statements are self-evidently true, hence the importance of moving to electric cars.

In fact, cars (light-duty transportation) account for less than 5% of global energy demand, with U.S. cars accounting for 19% of the global car fleet, declining to under 15% by 2050 as car demand grows faster outside the U.S.

Putting these facts together, and they are indisputable facts, provides a stunning insight.

The U.S. car fleet accounts for a mere 1.0% of global energy demand (5% x 19%), declining to 0.8% by 2050. So even if the U.S. shifts 100% to electric-powered cars, the maximum climate impact in 2050 is a meaningless 0.2% (22% x 0.8%) reduction in global CO2 emissions from the current electric grid, up to a maximum of 0.5% assuming solar, wind, and hydro can, implausibly, power 60% of electric demand.

In other words, there is no factual basis to claim that the government mandate to switch to electric cars will have any material impact on global CO2 emissions. (Read more) ☼
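The chain of multiplications in this excerpt is easy to reproduce. The sketch below simply re-runs the excerpt's own arithmetic with the percentages as quoted; the variable names are mine, "less than 5%" is treated as exactly 5%, and the inputs are not independently verified.

```python
# Percentages as quoted in the excerpt; none are independently sourced here.
cars_share_of_global_energy = 0.05   # "less than 5%", used as an upper bound
us_share_of_car_fleet = 0.19         # U.S. share of the global car fleet today
us_cars_share_2050 = 0.008           # the excerpt's 2050 figure (0.8%)
renewable_share_now = 0.22           # hydro, solar and wind share of U.S. electricity
renewable_share_high = 0.60          # the excerpt's "implausible" upper case

# The grid mix quoted earlier in the excerpt should add up to 100%.
grid_mix_pct = {"natural gas and coal": 60, "nuclear": 18, "renewables": 22}
grid_total_pct = sum(grid_mix_pct.values())

# U.S. cars as a share of global energy demand today (5% x 19%).
us_cars_share_now = cars_share_of_global_energy * us_share_of_car_fleet

# Excerpt's estimate of the emissions reduction in 2050 under each grid case.
reduction_current_grid = renewable_share_now * us_cars_share_2050
reduction_high_renewables = renewable_share_high * us_cars_share_2050

print(f"Grid mix total: {grid_total_pct}%")
print(f"U.S. cars, share of global energy now: {us_cars_share_now:.2%}")
print(f"Reduction with current grid: {reduction_current_grid:.2%}")
print(f"Reduction with 60% renewables: {reduction_high_renewables:.2%}")
```

Before rounding, the three products come to 0.95%, about 0.18% and 0.48%, which the excerpt reports as 1.0%, 0.2% and 0.5%.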

EV’s Manganese Future

by Pat Risner

Manganese, a federally designated critical mineral and one of five minerals to earn increased focus under the Defense Production Act in 2022, is emerging as a potential gamechanger in the future of EVs. The problem: the United States has not actively mined manganese in fifty years, and more than 90% of manganese processing is currently done in China.

In Southern Arizona, we are looking to change that.

South32’s Hermosa project, located in a historic mining district in the Patagonia Mountains, is currently the only advanced mine development project in the United States that could produce two federally designated critical minerals—manganese and zinc. Both are critical to the clean energy transition. (Read more) ☼

Freeze Frame: Cold Weather Shuts Down EVs (link) ☼

‘Bunch Of Dead Robots’: Charging Stations Turn Into EV Graveyards Thanks To Frigid Temps (link) ☼

ENVIRONMENT

Lake Mead Recovery Stuns Climate Change Zealots

by Rick Manning

The water levels of Lake Mead had dropped more than 45 feet in the three years from January of 2020 to December of 2022. Knowing ‘scientists’ worried that the Lake could approach ‘dead pool’ levels in the near future, ending the electricity generation from the dam, a catastrophic event for the Las Vegas and Phoenix population centers.

But something unexpected happened in 2023. It rained and snowed a lot in the California Sierra Nevada mountain range, but more significantly, the Rocky Mountains in Utah and Colorado also received above average rainfall and snow — increasing run off throughout the year. Now, Lake Mead’s water level is a whopping 23 feet higher than a year prior, cutting the effects of the three-year drought in half. (Read more) ☼
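The "in half" figure follows from the two water-level changes quoted above. Here is a minimal sketch, assuming the drop was exactly 45 feet (the excerpt says "more than 45", so the recovered fraction is an upper bound); the variable names are illustrative.

```python
# Water-level changes as quoted in the excerpt, in feet.
drop_2020_to_2022_ft = 45   # "more than 45 feet", treated as a lower bound on the drop
rise_in_2023_ft = 23

# Fraction of the three-year drop regained in one year.
recovered_fraction = rise_in_2023_ft / drop_2020_to_2022_ft
still_below_ft = drop_2020_to_2022_ft - rise_in_2023_ft

print(f"Recovered about {recovered_fraction:.0%} of the drop")
print(f"Still at least {still_below_ft} ft below the January 2020 level")
```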

ECONOMY

MINERAL COMMODITY SUMMARIES 2023

Source U.S. Geological Survey:

https://pubs.usgs.gov/periodicals/mcs2023/mcs2023.pdf

Arizona was #1 in production of cement, copper, molybdenum, mineral concentrates, sand and gravel (construction), stone (crushed). Value: $10.1 billion.

Arizona was the leading copper-producing State and accounted for approximately 70% of domestic output.

Domestic gemstone production included agate, beryl, coral, diamond, garnet, jade, jasper, opal, pearl, quartz, sapphire, shell, topaz, tourmaline, turquoise, and many other gem materials. In descending order of production value, Arizona led the Nation in natural gemstone production, followed by Oregon and Nevada.

The top 10 ranked States (based on total value including withheld values) were, in descending order of production value, Arizona, Nevada, Texas, California, Minnesota, Alaska, Florida, Utah, Michigan, and Missouri. ☼

STATE OF THE UNION

These 22 States Urge High Court to Allow Biden Admin, Big Tech to Censor Online Speech (link)

Commentary: Biden Admin Wants To Promote Green Energy By Destroying 4 Hydroelectric Dams (link)

Past Time to Undo Obama’s ‘Fundamental Transformation’

by Clarice Feldman

Two decades of Obama policies have led to wars in the Middle East, the destruction of our educational systems, the danger to our lives from poor management, and a substantially weakened military capability. (Read more) ☼

Naked Power: Now Gov Wants to Regulate Our Clothes — in Climate’s Name (link) ☼

Biden admin failing to track Chinese ownership of US farmland: govt watchdog (link)

“This report confirms one of our worst fears: that not only is the USDA unable to answer the question of who owns what land and where, but that there is no plan by the department to internally reverse this dangerous flaw that affects our supply chain and economy,” Congressional Western Caucus Chairman Dan Newhouse, R-Wash., said. “Food security is national security, and we cannot allow foreign adversaries to influence our food supply while we stick our heads in the sand.” ☼

Can Texas Constitutionally Engage in War and Protect Itself From Imminent Danger?

by Joe Wolverton, II, J.D.

“No State shall, without the Consent of Congress … engage in War, unless actually invaded, or in such imminent Danger as will not admit of delay.” — Article I, Section 10, Clause 3 of the U.S. Constitution

“As each state will expect to be attacked and wish to guard against it, each will retain its own militia for its own defense.” — James Madison, speech at the Virginia Ratifying Convention, June 16, 1788

“This is not over. Texas’ razor wire is an effective deterrent to the illegal crossings Biden encourages. I will continue to defend Texas’ constitutional authority to secure the border and prevent the Biden Admin from destroying our property.”

That’s the message sent via X (formerly Twitter) by Texas Governor Greg Abbott. (Read more) ☼

——————————————————————————————-

“I think we have more machinery of government than is necessary, too many parasites living on the labor of the industrious.” —Thomas Jefferson (1824)

“No statement should be believed because it is made by an authority.” – Robert A. Heinlein

END